Inducing illusory ownership of a virtual body

http://www.ehrssonlab.se/pdfs/Slater-et-al-2009.pdf

When the experimenter touched the real hand

of the subject with the Wand, the subject would

see the virtual ball touch the virtual hand, registered

in the same place on the virtual hand. In

this way synchronous visual and tactile stimuli

could be applied to the virtual and real hand

(Figure 1A). The asynchronous stimulation in

the control condition was achieved by using prerecorded

movements of the virtual ball. Using

this setup we compared the responses between

two groups of volunteers, with 21 participants

in the synchronous and 20 in the asynchronous

condition. The specific questions we used to

indicate the illusion were:

1. Sometimes I had the feeling that I was receiving

the hits in the location of the virtual arm.

2. During the experiment there were moments

in which it seemed as if what I was feeling was

caused by the yellow ball that I was seeing on

the screen.

3. During the experiment there were moments

in which I felt as if the virtual arm was my

own arm.

EXPERIMENT 2 – VISUAL–MOTOR SYNCHRONY

Having demonstrated that visuo-tactile correlations

can induce an illusion of ownership of a virtual

arm, we then explored whether this illusion

can be induced in the absence of tactile stimulation

– see also Dummer et al. (2009) and Tsakiris

et al. (2006). We carried out an experiment to

investigate whether the virtual arm illusion can

be induced by active movements of the fingers and

hand (Sanchez-Vives et al., in preparation, with a

preliminary report by Slater et al., 2008b). There

were 14 male participants in this within-groups

counter-balanced experimental design. The illusion-related

questions were:

1. I sometimes felt as if my hand was located

where I saw the virtual hand to be.

2. Sometimes I felt that the virtual arm was my

own arm.

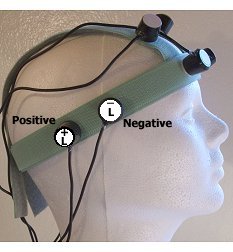

Here the participants wore a data glove that

detects hand and finger positions and transmits

real-time data to the computer that controls the

display of a virtual hand (Figure 1B). Only when

the movement of the virtual hand was synchronous

with the movement of the participant’s real

hand was there an ownership illusion. This was

indicated by questionnaire response (the two

questions above) and proprioceptive drift (using

the method introduced by Botvinick and Cohen,

1998). The fact that the illusion could be induced by

active movements and congruent visual feedback

is important for virtual reality applications where

participants will need to interact with environmental

objects.
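Proprioceptive drift, in the tradition of Botvinick and Cohen (1998), compares where participants judge their hand to be before and after stimulation. The sketch below is a minimal illustration under stated assumptions, not the analysis used in the experiment: the function name, the one-dimensional coordinate in centimetres, and the sample values are all hypothetical.

```python
from statistics import mean


def proprioceptive_drift(pre_cm, post_cm, real_hand_cm, virtual_hand_cm):
    """Mean shift in judged hand position (hypothetical helper).

    Positions lie on a single axis, in centimetres. The result is signed
    so that a positive value means the judged position moved toward the
    virtual hand and a negative value means it moved away.
    """
    # +1 if the virtual hand lies at a larger coordinate than the real hand.
    direction = 1.0 if virtual_hand_cm >= real_hand_cm else -1.0
    return direction * (mean(post_cm) - mean(pre_cm))


# Made-up position judgements for one participant (not data from the study).
pre = [0.5, -0.2, 0.3]
post = [3.1, 2.4, 2.9]
drift = proprioceptive_drift(pre, post, real_hand_cm=0.0, virtual_hand_cm=20.0)
print(f"drift toward virtual hand: {drift:.1f} cm")
```

A drift reliably above zero in the synchronous condition, and not in the asynchronous one, is the pattern usually taken as behavioural support for the questionnaire reports.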

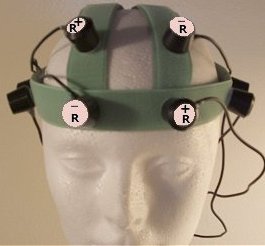

EXPERIMENT 3 – USING A BRAIN–COMPUTER INTERFACE

We carried out a further experiment but without

any tactile stimulation or overt movements

(Perez-Marcos et al., 2009). Here the participants

had the task of opening and closing their virtual

hand through a brain–computer interface

(BCI). This used a cued motor imagery paradigm

(Pfurtscheller and Neuper, 2001) on which participants

had been previously trained (Figure 1D).

There were two conditions – in the synchronous

one the hand opened and closed as a function of

the participant’s motor imagery. In the second –

asynchronous – condition the hand opened

and closed independently of the subject’s motor

imagery. In the synchronous condition, but not in

the asynchronous, there was a sense of ownership

of the virtual hand. After the 5 min of BCI control

(synchronous or asynchronous) of the arm, the

virtual arm and table suddenly fell and the EMG

recordings showed that there was greater muscle

activity in the arm compared to an earlier reference

period before the arm fell – but only for

the synchronous condition. However, there was

no proprioceptive drift in either condition. This

may suggest that actual sensory feedback (touch

or proprioceptive feedback) is necessary for recalibration

of position sense and the elicitation of

a full-blown virtual hand illusion. Alternatively,

mental imagery may not be as potent in inducing

the illusion as actual stimulation. Future experiments

are needed to clarify to what degree virtual

limbs can be owned by BCI control alone.
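The text does not say how muscle activity was quantified. As a rough illustration only, the sketch below assumes root-mean-square (RMS) amplitude as the summary measure and compares a window after the virtual arm fell with an earlier reference window. The function names, the window contents, and the sample values are hypothetical.

```python
import math


def rms(samples):
    """Root-mean-square amplitude of a list of EMG samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def emg_response_ratio(reference, event):
    """RMS of the post-event window divided by RMS of the reference window.

    A ratio above 1 means more muscle activity after the event than
    during the reference period (hypothetical helper, assumed measure).
    """
    return rms(event) / rms(reference)


# Made-up samples in arbitrary units (not data from the study).
reference_window = [0.1, -0.1, 0.1, -0.1]
event_window = [0.3, -0.3, 0.3, -0.3]
ratio = emg_response_ratio(reference_window, event_window)
print(f"event/reference RMS ratio: {ratio:.2f}")
```

Under this assumed measure, the reported result would correspond to ratios above 1 in the synchronous condition only.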

THE VIRTUAL BODY

To what extent can the multisensory correlations

employed to produce the virtual hand illusion

generalise to the whole body? The evidence

is beginning to point towards an affirmative

answer to this question – that the illusion of

ownership of a virtual body may be generated.

There is both indirect and direct evidence for

this. In Ehrsson (2007) a setup was employed

to give people the illusion that they were behind

their real bodies. Subjects wore a set of head-mounted

displays that displayed real-time stereoscopic

images from two cameras located behind

where they were actually seated – thus shifting

their visual ego-center to behind themselves.

The experimenter was standing just behind the

participant and the participant could see where

they were sitting in the room and identify the

experimenter standing behind them just next

to them. The experimenter then used a stick

to tap their chest (out of sight) while tapping

underneath the location of the cameras. The felt

tapping was either synchronous with the visual

jabbing movements towards a point beneath the

cameras, or asynchronous. In the synchronous

condition subjects reported a strong illusion of

being behind their physical bodies as judged

by the questionnaire responses, for example ‘I

experienced that I was located at some distance

behind the visual image of myself, almost as if I

were looking at someone else’ (Supplementary

Figure 1, Ehrsson, 2007). People also experienced

that the scientist was standing in front

of them, i.e. there had been a change in the perceived

self-location. This finding was reinforced

by skin conductance responses that correlated

with an attack on their ‘phantom body’ location

in the synchronous but not in the asynchronous

condition. Thus this is evidence that the sense

of one’s body place can be dislocated to a position

which is different from the body’s veridical

position, and is therefore indirect evidence for

the idea that a virtual body might become felt

as one’s own.

More direct evidence has come from Petkova

and Ehrsson (2008), who employed cameras

attached to the head of a manikin that was looking

down on the manikin’s body. Again the video signals

from these cameras were presented in real

time to the participant who was wearing a set of

head-mounted displays. Now looking down at

themselves subjects would see the manikin body

in a location similar to where their own body would

be. Synchronous tapping on the stomach of the

manikin and the real stomach resulted in a strong

illusion of ownership of the entire body (as evidenced

by the questionnaire responses), which

was again confirmed by augmented skin conductance

responses in correspondence to physical

attacks on different body parts of the manikin in

the synchronous but not in the asynchronous tapping

condition. This suggests that entire bodies

can be owned and that ownership of one stimulated

body part automatically enhances ownership

of other seen parts of the body.

A similar full body experiment was reported by

Lenggenhager et al. (2007). In the critical experiment

the participants looked at a body presented

a few meters in front of themselves through a

head-mounted display. Thus the participants saw

the back of the body, and when the experimenter

stroked them on their back, they would see this

stroking on the back at the distant body location.

This resulted in the reported sense of being at the

location of the body in front, and a version of the

proprioceptive drift measure provided a further

verification. In this case there was a reported projection

of the sense of touch and self-localisation to

a body observed from a third-person perspective,

which is different from the experiments by Ehrsson

(2007) and Petkova and Ehrsson (2008) where the

owned artificial body was always perceived from

a first-person perspective. To what extent the reported

self-attribution in these two sets of experiments

engages common or different perceptual mechanisms

is still an open question (see Science E-letters

for further discussion). However, Lenggenhager

et al. (2009) recently reported an experiment that

directly compared the two paradigms and found

evidence to suggest that self-localisation is strongly

influenced by where the correlated visual–tactile

event is seen to occur.

DISCUSSION

The experiments reviewed in this article strongly

suggest that virtual limbs and bodies in virtual

reality could be owned by participants just as

rubber hands can be perceived as part of one’s

body in physical reality. Furthermore, the experimental

findings suggest that ownership of virtual

limbs and bodies may engage the same perceptual,

emotional, and motor processes that make

us feel that we own our biological bodies. To what

extent this ‘virtual body illusion’ works when the

movements of the simulated body are controlled

directly by the participant’s thoughts, via BCI

control, is an important emerging area for future

experiments.

The visual realism of the virtual arm and

the arm’s environment does not seem to play

an important role for the induction of the illusion.

In our laboratory we have seen the illusion

work well with many different types of simulated

hands. This is similar to the traditional rubber

hand illusion which does not seem to depend on

the physical similarity between the rubber hand

and the person’s real hand (anecdotal observations;

see also Longo et al., 2009). Further,

adding realism to the simulation by adding shadows

(Figure 1C) did not enhance the ownership

illusion (Perez-Marcos et al., 2007), unpublished

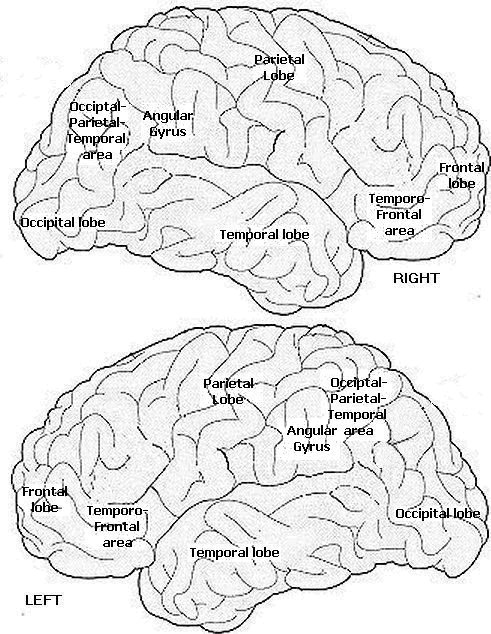

results. These observations would fi t with physiological

properties of cells in premotor and

intraparietal cortices which are involved in the

fast localisation of limbs in space (Graziano,

1999; Graziano et al., 2000), but not involved

in visual object recognition and the fine analysis

of visual scenes. This realisation is important for

the development of virtual reality applications

because it means that one is not restricted to

ultra-realistic simulations and high-definition

visual displays.

Virtual reality additionally provides the power

to investigate these illusions at the whole body

level. In Figure 2 we show an example of what

can be seen when someone wears a tracked

head-mounted display, looks down, and sees a

virtual body in place of their real one. The very

act of looking down, changing head orientation

in order to gaze in a certain direction, with the

visual images changing as they would in reality

is already a powerful clue that you are located

in the virtual place that you perceive. We argue

elsewhere that multisensory contingencies that

correspond approximately to those employed

to perceive physical reality provide a necessary

condition for the illusion of being in the virtual

place (Slater, 2009). Now imagine that you move,

and the virtual body moves in correspondence

with your movements, or you see something

touch your virtual body and you feel the touch

in the corresponding location in your real body.

These events add significantly to the reality of

what is being perceived – not only are you in the

virtual place, but you also have the illusion that

the events occurring are real – therefore increasing

the likelihood that you would respond realistically

to virtual events and situations.

FUTURE PERSPECTIVE

BCI control of owned virtual bodies will probably

have many important clinical and industrial

applications, for example in the development of

the next-generation BCI applications for totally

paralysed individuals. These people would in

principle be able to control and own a virtual

body and engage in interactions in simulated

environments. The first attempt in this direction

(Experiment 3; Perez-Marcos et al., 2009)

suggests that this dream might have a chance of

success. When the motor imagery resulted in the

expected opening and closing of the virtual hand

then the ownership illusion and motor recruitment

occurred (but not proprioceptive drift).

The fundamental question here is whether a

correlation between intentions of movement

and pure visual feedback, in the absence of any

tactile or proprioceptive feedback, is sufficient

to induce the rubber hand illusion and produce

recalibration of visual, tactile and proprioceptive

representations. If so, this would demonstrate

that multisensory recalibration could occur as

a result of internal simulation of action and its

sensory consequences. This issue is not fully

settled yet, given that in Perez-Marcos et al. the

illusion of ownership did not go along with proprioceptive

drift. Future experiments whereby the

participants can execute different types of virtual

hand movements via so-called ‘un-cued’ BCI may

be a promising avenue for research

of this sort.